Test an autograder locally by installing its runtime, running sample submissions, and validating outputs.

I have built and tested autograders for university courses and bootcamps. This guide explains, step by step, how to test an autograder on your laptop, from preparing your environment to reproducing student issues and automating checks. You will learn practical setups, sample tests, debugging tips, and real-world lessons that save time and prevent grading errors. Read on for a clear, hands-on path to reliable local autograder testing.

Why test an autograder on a laptop

Testing an autograder on your laptop catches errors early. A local test gives quick feedback and avoids breaking a shared grading server. It is cheaper and safer than running every change through a remote system.

Testing locally also helps you reproduce student failures. You can iterate fast, add logs, and experiment with fixes. This reduces surprises during grading weeks and makes your autograder more robust.

Source: practicetestgeeks.com

Prepare your laptop environment

Start with a clean, repeatable environment. Use a virtual environment or container that matches the grader's runtime, and pin tools and libraries to known versions.

Steps to prepare:

- Install a package manager like Homebrew, apt, or Chocolatey for consistent installs.

- Create a Python virtual environment or use Docker to mirror the grader base image.

- Install testing frameworks used by your autograder, such as pytest, unittest, or custom runners.

- Add linters and formatters to validate teacher-provided code before grading.

Tips:

- Use Docker if the autograder depends on system packages or exact OS behavior.

- Save environment files (requirements.txt, Pipfile, Dockerfile) so others can reproduce your setup.
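Pinning only helps if the laptop actually matches the pins. The following is a minimal sketch, assuming a `requirements.txt` with exact `name==version` pins; the helper name `check_pins` is hypothetical. It compares each pin with the installed version using the standard library's `importlib.metadata`:

```python
from importlib.metadata import PackageNotFoundError, version


def check_pins(requirement_lines: list[str]) -> list[str]:
    """Compare exact `name==version` pins with the packages actually installed.

    Returns one message per mismatch; an empty list means every exact pin
    matches. Lines using other specifiers (>=, ~=, ===), extras, or environment
    markers are skipped to keep this sketch simple. Versions are compared as
    plain strings.
    """
    problems = []
    for raw in requirement_lines:
        line = raw.split("#", 1)[0].strip()  # drop comments and surrounding whitespace
        if "==" not in line or "===" in line or "[" in line or ";" in line:
            continue
        name, _, rest = line.partition("==")
        # Keep only the version token and ignore trailing options such as --hash.
        name, pinned = name.strip(), (rest.split() or [""])[0]
        try:
            installed = version(name)
        except PackageNotFoundError:
            problems.append(f"{name}: pinned {pinned}, not installed")
            continue
        if installed != pinned:
            problems.append(f"{name}: pinned {pinned}, installed {installed}")
    return problems
```

You could call it as `check_pins(Path("requirements.txt").read_text().splitlines())` before each test run. Because the comparison is plain string equality, `2.31` and `2.31.0` count as different, which errs on the side of flagging drift.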

Source: zybooks.com

Core testing strategies

A layered testing strategy makes local autograder testing clear and repeatable. Combine unit tests, integration tests, and end-to-end runs to cover every layer.

Key strategies:

- Unit test your grading functions with isolated inputs.

- Integration test by running the full grading pipeline on sample submissions.

- End-to-end test by simulating student submissions and verifying final scores and feedback.
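Unit tests are easiest when a grading function is a pure function of its inputs. The sketch below uses a hypothetical `grade_numeric_answer` helper, which is not taken from any particular autograder. The test functions follow pytest's naming convention, so pytest would collect them from a `test_*.py` file. They are plain functions with `assert`, so they also run without pytest.

```python
import math


def grade_numeric_answer(expected: float, submitted: str, points: float, rel_tol: float = 1e-6) -> float:
    """Hypothetical grading function: full points if the submitted text parses
    to a number close to `expected`, otherwise zero."""
    try:
        value = float(submitted.strip())
    except ValueError:
        return 0.0  # unparseable answers earn nothing instead of crashing the grader
    return points if math.isclose(value, expected, rel_tol=rel_tol) else 0.0


def test_exact_answer_gets_full_credit():
    assert grade_numeric_answer(3.14, "3.14", points=5) == 5


def test_whitespace_is_ignored():
    assert grade_numeric_answer(2.0, "  2.0\n", points=1) == 1


def test_wrong_answer_gets_zero():
    assert grade_numeric_answer(2.0, "2.5", points=1) == 0


def test_garbage_input_does_not_crash():
    assert grade_numeric_answer(2.0, "two", points=1) == 0
```

Each test feeds one isolated input and checks one behavior, so a failure points straight at the rule that broke.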

Quick questions:

How do I simulate multiple student submissions?

Create sample folders or zip files that match the submission format, then run them through the grader in a loop to validate batch behavior.
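Here is a minimal sketch of that loop. It assumes each submission is either its own subfolder or a `.zip` next to the others. `grade_one` is a hypothetical stand-in for whatever callable invokes your grader on one folder.

```python
import zipfile
from pathlib import Path
from typing import Callable


def unpack_zips(submissions_root: Path) -> None:
    """Extract each `<name>.zip` into a sibling `<name>/` folder unless it already exists."""
    for archive in sorted(submissions_root.glob("*.zip")):
        target = archive.with_suffix("")
        if not target.exists():
            with zipfile.ZipFile(archive) as zf:
                zf.extractall(target)


def grade_batch(submissions_root: Path, grade_one: Callable[[Path], float]) -> dict:
    """Grade every submission folder in a stable (sorted) order.

    A failing submission is recorded as an error string instead of aborting
    the batch, which is exactly the behavior a batch test should confirm.
    """
    results = {}
    for folder in sorted(p for p in submissions_root.iterdir() if p.is_dir()):
        try:
            results[folder.name] = grade_one(folder)
        except Exception as exc:  # keep going; record the failure per submission
            results[folder.name] = f"error: {type(exc).__name__}: {exc}"
    return results
```

Sorting the folders keeps runs reproducible, so two batch runs can be diffed line by line.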

Can I test the autograder without Docker?

Yes. Use a virtual environment and install all dependencies, but check for OS-level differences between your laptop and the grader (system libraries, file paths, available shell tools) and test them where they matter.

How often should I run full tests?

Run quick unit tests on every change and full integration tests before deployment or large grading runs.

Source: codegrade.com

Creating test cases and fixtures

Good test cases show both expected behavior and failure modes. Build a library of sample student submissions to exercise tricky cases.

What to include in test cases:

- Correct solutions covering typical inputs.

- Edge cases that break naive code (empty input, large files, invalid types).

- Malicious or malformed submissions to test sandboxing and security.

- Partial-credit scenarios and style-only differences.

Organize fixtures:

- Use a folder per test case with descriptive names.

- Store expected outputs and any reference data.

- Version tests with your grader so changes stay traceable.
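A small loader makes the fixture structure enforceable. The layout here is assumed for illustration only: each case folder holds a `submission/` subfolder with the student files and an `expected.json` with at least a `score`. `Fixture` and `load_fixtures` are hypothetical names.

```python
import json
from dataclasses import dataclass
from pathlib import Path


@dataclass
class Fixture:
    name: str         # descriptive case name, e.g. "edge_empty_input"
    submission: Path  # folder with the student files fed to the grader
    expected: dict    # parsed expected.json: score plus any reference data


def load_fixtures(root: Path) -> list[Fixture]:
    """Load every case folder under `root`, failing loudly on incomplete cases.

    Assumed layout per case: `<case>/submission/` and `<case>/expected.json`.
    Keeping expected.json outside submission/ means the grader never sees it.
    """
    fixtures = []
    for case in sorted(p for p in root.iterdir() if p.is_dir()):
        submission = case / "submission"
        expected_file = case / "expected.json"
        if not submission.is_dir() or not expected_file.is_file():
            raise FileNotFoundError(f"fixture {case.name!r} needs submission/ and expected.json")
        expected = json.loads(expected_file.read_text())
        if "score" not in expected:
            raise ValueError(f"fixture {case.name!r}: expected.json has no 'score'")
        fixtures.append(Fixture(case.name, submission, expected))
    return fixtures
```

Failing on an incomplete case is deliberate. A fixture without expected output silently tests nothing.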

Source: zybooks.com

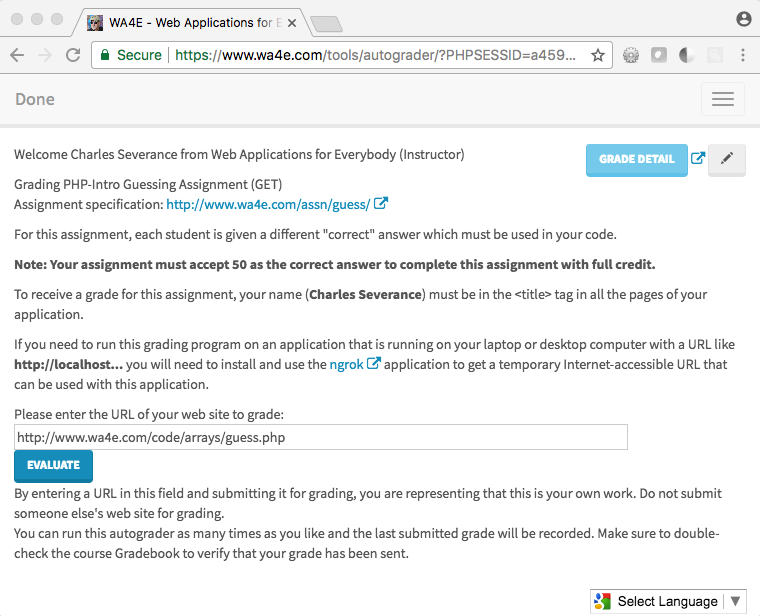

Running autograder locally: step-by-step

Follow this sequence when you test an autograder on your laptop so you don't skip a step.

- Recreate environment: load the virtualenv or start the Docker container.

- Place a sample submission in the expected input path or create a zip file.

- Run the grader command used in production.

- Capture logs, the grade report, and any output files.

- Compare results to expected outputs automatically with scripts.

Example command flow:

- Activate environment.

- python grader.py --input submissions/test1 --output results/test1

- cat results/test1/report.json

Automate these steps with a script to speed up repetitive tests.
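The run-and-compare steps can be scripted roughly as follows. This is a sketch that assumes, as in the example commands, a `grader.py` that accepts `--input` and `--output` and writes a `report.json` containing a numeric `score`. `run_grader` and `compare_report` are hypothetical helper names, so adjust the flags and fields to match your grader.

```python
import json
import subprocess
import sys
from pathlib import Path


def run_grader(grader: Path, submission: Path, output: Path, timeout: float = 300.0) -> dict:
    """Run the grader CLI on one submission and return the parsed report.

    Assumes the grader takes --input/--output and writes report.json into the
    output folder, as in the example commands; adjust to your grader.
    """
    output.mkdir(parents=True, exist_ok=True)
    cmd = [sys.executable, str(grader), "--input", str(submission), "--output", str(output)]
    proc = subprocess.run(cmd, capture_output=True, text=True, timeout=timeout)
    # Keep the logs beside the report so a failed run can be triaged later.
    (output / "stdout.log").write_text(proc.stdout)
    (output / "stderr.log").write_text(proc.stderr)
    if proc.returncode != 0:
        raise RuntimeError(f"grader exited with {proc.returncode}; see {output / 'stderr.log'}")
    return json.loads((output / "report.json").read_text())


def compare_report(report: dict, expected: dict, tol: float = 1e-6) -> list[str]:
    """Return mismatch messages between a report and its expected values (empty = pass)."""
    problems = []
    actual = report.get("score")
    # Scores get a small tolerance; every other expected field must match exactly.
    if not isinstance(actual, (int, float)) or abs(actual - expected["score"]) > tol:
        problems.append(f"score: expected {expected['score']}, got {actual!r}")
    for key, value in expected.items():
        if key != "score" and report.get(key) != value:
            problems.append(f"{key}: expected {value!r}, got {report.get(key)!r}")
    return problems
```

Returning a list of messages rather than a boolean keeps the output useful when several fields drift at once.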

Source: codegrade.com

Debugging failed runs

When a test fails, isolate the problem fast. Good logs and reproducible inputs are your best tools.

Debug checklist:

- Re-run the failing sample with verbose logging.

- Check environment differences: Python versions, package versions, OS-level libs.

- Run the student code manually to see runtime errors.

- Use unit tests to isolate which grading function failed.
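To check environment differences, one option is to record a small fingerprint on both machines and diff the two. This sketch uses only the standard library. `environment_fingerprint` and `diff_fingerprints` are hypothetical names, and you choose which packages to list.

```python
import platform
from importlib.metadata import PackageNotFoundError, version


def environment_fingerprint(packages: list[str]) -> dict[str, str]:
    """Collect facts that often differ between a laptop and the grading server."""
    info = {
        "python": platform.python_version(),
        "implementation": platform.python_implementation(),
        "os": f"{platform.system()} {platform.release()}",
        "machine": platform.machine(),
    }
    for name in packages:
        try:
            info[f"pkg:{name}"] = version(name)
        except PackageNotFoundError:
            info[f"pkg:{name}"] = "missing"
    return info


def diff_fingerprints(local: dict[str, str], remote: dict[str, str]) -> dict[str, tuple]:
    """Return {key: (local_value, remote_value)} for every key that differs."""
    keys = sorted(set(local) | set(remote))
    return {k: (local.get(k), remote.get(k)) for k in keys if local.get(k) != remote.get(k)}
```

In practice you would save each machine's fingerprint with `json.dumps` and load both on one side to diff them.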

Common causes of failure:

- Missing dependencies or wrong package versions.

- Timeouts or infinite loops in student code.

- Differences between the sandbox and local environment.
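When you run student code manually, a wall-clock timeout separates infinite loops from crashes. This is a minimal sketch for Python submissions only. `RunResult` and `run_student_script` are hypothetical names, and a real grader may launch code differently.

```python
import subprocess
import sys
from dataclasses import dataclass
from pathlib import Path
from typing import Optional


@dataclass
class RunResult:
    status: str                # "ok", "error", or "timeout"
    returncode: Optional[int]  # None when the run was killed for timing out
    stdout: str
    stderr: str


def _text(data) -> str:
    """TimeoutExpired may carry partial output as bytes, str, or None."""
    if data is None:
        return ""
    return data.decode(errors="replace") if isinstance(data, bytes) else data


def run_student_script(script: Path, stdin_text: str = "", timeout: float = 5.0) -> RunResult:
    """Run one student Python file with a wall-clock timeout, outside the grader.

    Reporting timeouts as their own status separates infinite loops from crashes.
    """
    script = script.resolve()  # absolute path, since cwd changes below
    try:
        proc = subprocess.run(
            [sys.executable, str(script)],
            input=stdin_text,
            capture_output=True,
            text=True,
            timeout=timeout,   # subprocess.run kills the child when this expires
            cwd=script.parent,
        )
    except subprocess.TimeoutExpired as exc:
        return RunResult("timeout", None, _text(exc.stdout), _text(exc.stderr))
    status = "ok" if proc.returncode == 0 else "error"
    return RunResult(status, proc.returncode, proc.stdout, proc.stderr)
```

Only run untrusted submissions this way inside a sandbox or container; the timeout limits runtime, not what the code can touch.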

Source: examind.io

Automating tests and CI integration

Automating your local autograder tests lets your checks scale. Continuous integration (CI) catches regressions and enforces quality.

Set up CI:

- Add a test job that spins up your grading environment (Docker recommended).

- Run unit and integration tests on each pull request.

- Fail the build for regressions and attach logs for reviewers.

- Schedule nightly full-grader runs with larger sample sets.
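Most CI systems fail a build when a command exits non-zero, so the gate itself can be small. This sketch assumes your harness produces a mapping from fixture name to a list of mismatch messages, where an empty list means a pass. That format and the name `ci_gate` are assumptions.

```python
import sys
from typing import Mapping, Sequence, TextIO


def ci_gate(results: Mapping[str, Sequence[str]], out: TextIO = sys.stdout) -> int:
    """Print a summary for the CI log and return the process exit code.

    Exit code 0 means every fixture passed; 1 fails the build.
    """
    failed = {name: msgs for name, msgs in results.items() if msgs}
    for name in sorted(failed):
        print(f"FAIL {name}", file=out)
        for msg in failed[name]:
            print(f"    {msg}", file=out)
    print(f"{len(results) - len(failed)}/{len(results)} fixtures passed", file=out)
    return 1 if failed else 0
```

A test script would end with `sys.exit(ci_gate(results))`. The printed summary then lands in the CI log that reviewers see.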

Benefits:

- Early detection of bugs.

- Clear audit trail for changes.

- Easier collaboration across instructors and TAs.

Source: insidehighered.com

Performance, security, and limitations

Testing performance and security locally helps you understand how the autograder behaves under load. But local tests have limits compared to real servers.

Performance checks:

- Measure average runtime and peak memory on representative submissions.

- Test throughput and concurrency limits by running many submissions in parallel.
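Both performance checks can be sketched with the standard library. `tracemalloc` only sees memory that Python code allocates inside the current process, so this fits an in-process grading function. For graders that spawn subprocesses, measure memory at the OS level instead. `profile_in_process` and `parallel_wall_time` are hypothetical names.

```python
import statistics
import time
import tracemalloc
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Iterable, Sequence


def profile_in_process(grade_fn: Callable[[object], object], submissions: Sequence) -> dict[str, float]:
    """Mean/max wall time and peak Python-heap allocation across a non-empty sample.

    tracemalloc adds overhead, so treat these timings as relative, not absolute.
    """
    durations = []
    tracemalloc.start()
    try:
        for sub in submissions:
            start = time.perf_counter()
            grade_fn(sub)
            durations.append(time.perf_counter() - start)
        _, peak = tracemalloc.get_traced_memory()
    finally:
        tracemalloc.stop()
    return {"mean_s": statistics.mean(durations), "max_s": max(durations), "peak_bytes": float(peak)}


def parallel_wall_time(grade_fn: Callable[[object], object], submissions: Iterable, workers: int) -> float:
    """Wall time to grade all submissions with `workers` concurrent threads.

    Threads suit graders that mostly wait on subprocesses or I/O; CPU-bound
    pure-Python graders need processes to see a speedup.
    """
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=workers) as pool:
        list(pool.map(grade_fn, submissions))  # list() re-raises any worker exception
    return time.perf_counter() - start
```

Running `parallel_wall_time` with increasing `workers` shows where your laptop stops scaling.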

Security checks:

- Run untrusted code inside containers or sandboxes.

- Limit filesystem and network access for student code.

- Use timeouts and resource quotas.
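On Linux and other POSIX systems, you can add per-process quotas with the standard `resource` module. This sketch does not run on Windows. It caps CPU time and address space and gives the child a minimal environment. It is a quota layer, not a sandbox: it does not restrict filesystem or network access, so keep using containers for that. `run_limited` is a hypothetical name.

```python
import functools
import resource
import subprocess
import sys


def _apply_limits(cpu_seconds: int, memory_bytes: int) -> None:
    """Runs in the child just before exec (POSIX only): cap CPU time and address space."""
    resource.setrlimit(resource.RLIMIT_CPU, (cpu_seconds, cpu_seconds + 1))
    try:
        resource.setrlimit(resource.RLIMIT_AS, (memory_bytes, memory_bytes))
    except (ValueError, OSError):
        pass  # some platforms, e.g. macOS, may reject RLIMIT_AS; rely on the container there


def run_limited(cmd: list[str], timeout: float = 10.0, cpu_seconds: int = 5,
                memory_bytes: int = 512 * 1024 * 1024) -> subprocess.CompletedProcess:
    """Run untrusted code with CPU, memory, and wall-clock limits."""
    return subprocess.run(
        cmd,
        capture_output=True,
        text=True,
        timeout=timeout,                # wall clock: catches code that sleeps or blocks
        env={"PATH": "/usr/bin:/bin"},  # do not leak the grader's own environment variables
        # preexec_fn is not safe in multithreaded parents; call this from a single thread.
        preexec_fn=functools.partial(_apply_limits, cpu_seconds, memory_bytes),
    )
```

Hitting the CPU limit makes the kernel send `SIGXCPU`, so the child ends with a non-zero (negative) return code. Only the wall-clock `timeout` catches code that sleeps instead of computing.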

Limitations:

- Laptop hardware differs from production servers.

- Network-dependent features may behave differently.

- Always run final verification on staging or production-like systems.

Source: indigoresearch.org

Personal experience and lessons learned

I once graded a class of 300 students and found a token-checking bug two days before grades were due. Local tests caught it quickly and saved hours. From that project, I learned to always include malformed inputs and to keep a small set of “real student” fixtures.

Practical tips from my work:

- Keep a minimal failing example for each bug.

- Teach TAs how to run local tests; share environment files.

- Log structured results to speed triage.
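One common way to log structured results is JSON Lines: one JSON object per line, appended as each submission finishes. The field names `student`, `status`, and `score` below are illustrative assumptions, not a required schema.

```python
import json
from pathlib import Path


def log_result(log_path: Path, **record) -> None:
    """Append one grading result as a single JSON line (JSON Lines format)."""
    with log_path.open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(record, sort_keys=True) + "\n")


def failures(log_path: Path) -> list[dict]:
    """Read the log back and keep only records whose status is not "pass"."""
    with log_path.open(encoding="utf-8") as fh:
        records = [json.loads(line) for line in fh if line.strip()]
    return [r for r in records if r.get("status") != "pass"]
```

Because each line is independent, a crash mid-run loses at most one record, and standard tools such as `grep` still work on the file.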

Mistakes to avoid:

- Relying only on one machine for tests.

- Running tests without versioning the environment.

- Skipping security tests because they seem slow.

Frequently Asked Questions about testing an autograder on your laptop

How do I replicate the grader environment on my laptop?

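Build a Docker image from the same base image, OS packages, and Python or Node.js versions as the grader. If OS-level behavior does not matter, a virtual environment with matching language and package versions can be enough. Save the configuration files so others can reproduce the setup.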

What is the fastest way to test many submissions?

Write a small script that loops over sample submission folders or zip files and runs the grader in batch mode. Add parallelism only if your laptop has the CPU cores and memory to spare.

Can I debug student code inside the autograder?

Yes. Run the student code under a debugger inside a sandboxed environment and capture its output. Never run untrusted code without sandboxing.

How do I handle different programming languages?

Install language runtimes and use language-specific test harnesses. Containerize each language environment for reproducibility.
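A lightweight way to route each submission to the right runtime is a dispatch table keyed by file extension. The mapping below is an assumption for illustration. Each runtime must be installed, ideally in its own container image, and `java File.java` relies on the single-file source launcher in Java 11 and later.

```python
from pathlib import Path

# Assumed mapping from file extension to the command that runs a source file.
RUNNERS: dict[str, list[str]] = {
    ".py": ["python3"],
    ".js": ["node"],
    ".java": ["java"],  # single-file source launcher, Java 11+
}


def command_for(source: Path) -> list[str]:
    """Build the command line that runs one student source file."""
    try:
        runner = RUNNERS[source.suffix]
    except KeyError:
        raise ValueError(f"no runner configured for {source.suffix!r} files") from None
    return [*runner, str(source)]
```

Keeping the table in one place means adding a language is a one-line change plus a new container image.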

When should I move tests to CI?

Move tests to CI after local runs are stable, and you want automated regression checks on every code change. Use CI for repeatable, shared validation.

Conclusion

Testing an autograder on your laptop is the fastest way to validate logic, catch errors, and build confidence before grading runs. Set up a repeatable environment, create diverse test fixtures, automate checks with scripts and CI, and always sandbox untrusted code. Start small, add realistic student samples, and iterate based on failures. Try one graded assignment locally this week, automate its tests, and share your setup with the team to avoid last-minute surprises. Leave a comment with your toughest autograder bug, or subscribe for a checklist to get started.